Rolling Aggregations for Real-Time AI

The Queen of AI Features for Real-Time AI systems

Rolling aggregations keep behavioral and anomaly signals fresh for truly interactive AI. Modern approaches, such as incremental views and parallel pushdown aggregations, make them scalable and low latency. With Hopsworks, teams can choose shift-left (Feldera) or shift-right (RonDB) to balance simplicity and sub-millisecond freshness in production.

TL;DR

Rolling aggregations are among the most useful input data for AI systems, enabling behavioral change detection and anomaly detection in real-time. They capture recent trends and patterns in a compressed representation, enabling interactive AI systems to meet the lowest latency requirements for feature freshness. Rolling aggregations are used by both real-time ML systems (e.g., credit card fraud and personalized recommendations) and interactive agents (e.g., compressed user history/behavior or summary of recent activity).

Rolling Aggregations - the Queen of AI Aggregations

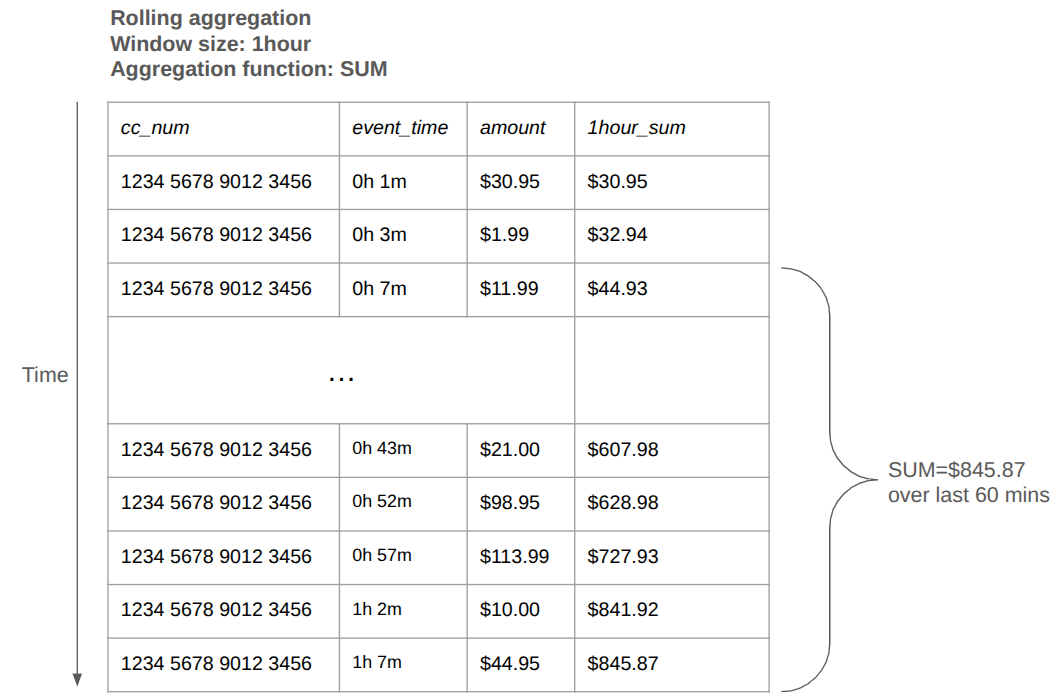

A rolling aggregation computes statistics over a continuously shifting window of data. You aggregate over the last N values or time period at each point.

Figure 1. A rolling aggregation computes an aggregation function (e.g., SUM, AVG, MIN, MAX, STDDEV, etc) over a window size of time-series data (e.g. last hour).
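To make the definition concrete, here is a minimal Python sketch of a naive rolling aggregation. It assumes events arrive sorted by time as `(minute, value)` pairs and that the window covers the last 60 minutes; the function name and data are illustrative only. Note that every new event rescans all earlier events, which is exactly the cost the rest of this article tries to avoid.

```python
from statistics import mean


def rolling_aggregates(events, window):
    """For each event at time ts, aggregate every event with ts - t < window.

    `events` is a time-sorted list of (timestamp, value) pairs.
    Returns one (timestamp, sum, mean, max) tuple per input event.
    """
    results = []
    for i, (ts, _) in enumerate(events):
        # Naive approach: rescan all events seen so far for every new event.
        in_window = [v for (t, v) in events[: i + 1] if ts - t < window]
        results.append((ts, sum(in_window), mean(in_window), max(in_window)))
    return results


# Timestamps are minutes since an arbitrary start; the window is one hour.
events = [(0, 10.0), (20, 30.0), (50, 20.0), (70, 40.0)]
results = rolling_aggregates(events, window=60)
for row in results:
    print(row)
```

The last event (minute 70) no longer includes the first event (minute 0), because that event has slid out of the one-hour window.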

Traditional windowed aggregations in stream processing (e.g., Apache Flink) are not designed to compute rolling aggregations, because rolling aggregations are computationally expensive: for every new event, you have to recompute the aggregate over all N events in the bucket. Instead, stream processors use tumbling or hopping (sliding) windows to group data into windows of time, such as the last 10 minutes or hour, and compute aggregations over those windows.

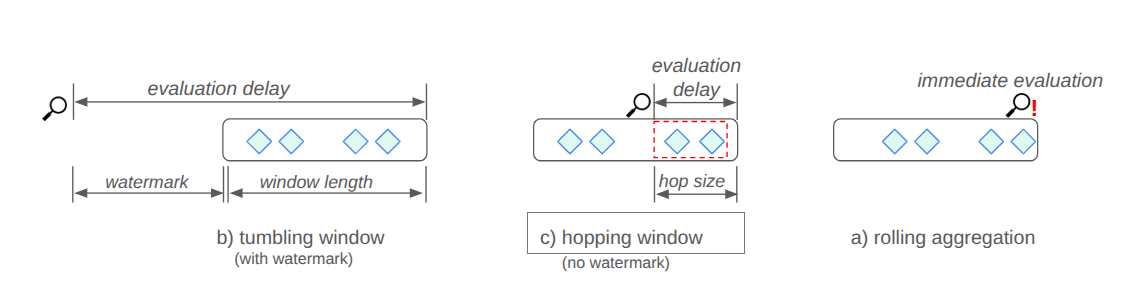

Figure 2. Tumbling windows and hopping windows introduce a delay between when an event arrives and when an aggregation result is computed. Rolling aggregations are computed immediately when the event arrives.

Figure 2 shows how tumbling windows only output aggregations after the window length and watermark have passed, while hopping windows wait until the hop size has passed. While tumbling and hopping windows make computing aggregations over streaming data computationally tractable, they introduce latency. The output aggregations can be as stale as the window length plus the watermark, or the hop size. In AI terms, the feature data they output is stale, and stale data makes your interactive AI applications laggy rather than intelligent. In contrast, rolling aggregations output results immediately when a new event arrives, producing fresh feature data.
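The staleness argument can be checked with a little arithmetic. The sketch below uses a deliberately simplified model (an assumption, not Flink's actual watermark semantics): a tumbling window emits its result once the window end plus a fixed watermark delay has passed, while a rolling aggregation emits when the event arrives. All times are in seconds and the function names are hypothetical.

```python
def tumbling_result_time(event_time, window_size, watermark_delay):
    """Earliest time at which a tumbling window's result reflects this event."""
    window_start = (event_time // window_size) * window_size
    window_end = window_start + window_size
    return window_end + watermark_delay


def rolling_result_time(event_time):
    """A rolling aggregation is updated as soon as the event arrives."""
    return event_time


event_time = 61         # event arrives 61 s into a 10-minute window
window_size = 600       # 10-minute tumbling window
watermark_delay = 30    # assumed fixed watermark delay

tumbling_staleness = tumbling_result_time(event_time, window_size, watermark_delay) - event_time
rolling_staleness = rolling_result_time(event_time) - event_time
print(tumbling_staleness, rolling_staleness)
```

In this model, an event that arrives early in the window waits almost the full window length plus the watermark before any feature reflects it.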

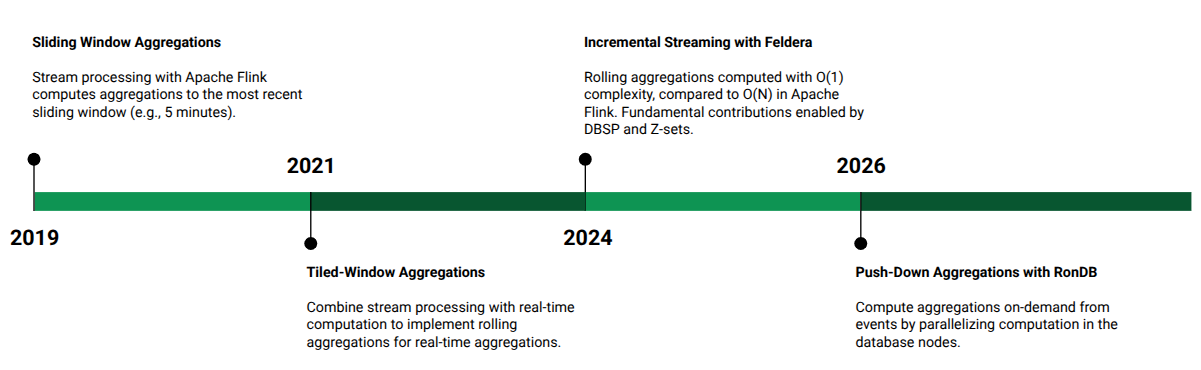

Figure 3. Brief history of increasing feature freshness for rolling aggregations.

In Figure 3, you can see a brief history of the journey from tumbling/hopping windows to solutions for computing rolling aggregations. The first adopted approach was tiled window aggregations, which combine stream processing with further computation at request time. Feldera later developed a lower-cost solution based on incremental views for stream processing. Most recently, with RonDB, we developed native database support for parallel processing of aggregations, avoiding the need for stream processing. We now describe these approaches.

Shift Left and Shift Right with Tiled Window Aggregations and Chronon

Airbnb’s Chronon framework provided the first novel solution to reduce the computational overhead of computing rolling aggregations with an approach called tiled time window aggregations. Tecton Rift (based on DuckDB) and Chalk.ai (based on Apache Velox) also provide variants on this solution for scalable rolling aggregations.

Say you want to compute a precise 240-hour aggregation. You could decompose the events into 24-hour tiles, computed daily at 12am. The idea is that you can compute the 240-hour aggregation by composing partial aggregates for the 24-hour tiles. This works trivially for some aggregations (e.g., min, max), but requires maintaining additional state for others (e.g., mean).
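As a rough illustration of why mean needs extra state, the sketch below keeps a `(count, total, minimum, maximum)` partial aggregate per tile. The `Partial` class is hypothetical and not taken from Chronon or any other framework; it only shows that min and max merge directly, while mean must be derived from the merged sum and count rather than by averaging per-tile means.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Partial:
    """Mergeable partial aggregate for one tile (assumes a non-empty tile)."""
    count: int
    total: float
    minimum: float
    maximum: float

    @staticmethod
    def of(values):
        return Partial(len(values), sum(values), min(values), max(values))

    def merge(self, other):
        # Each field combines independently of how events were split into tiles.
        return Partial(
            self.count + other.count,
            self.total + other.total,
            min(self.minimum, other.minimum),
            max(self.maximum, other.maximum),
        )

    @property
    def mean(self):
        return self.total / self.count


tile_a = Partial.of([1.0, 2.0, 3.0])
tile_b = Partial.of([10.0])
merged = tile_a.merge(tile_b)

correct_mean = merged.mean                      # uses merged sum and count
naive_mean = (tile_a.mean + tile_b.mean) / 2    # ignores tile sizes, so it is wrong
print(correct_mean, naive_mean)
```

Averaging the per-tile means weights a one-event tile the same as a three-event tile, which is why the tile has to carry the count alongside the sum.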

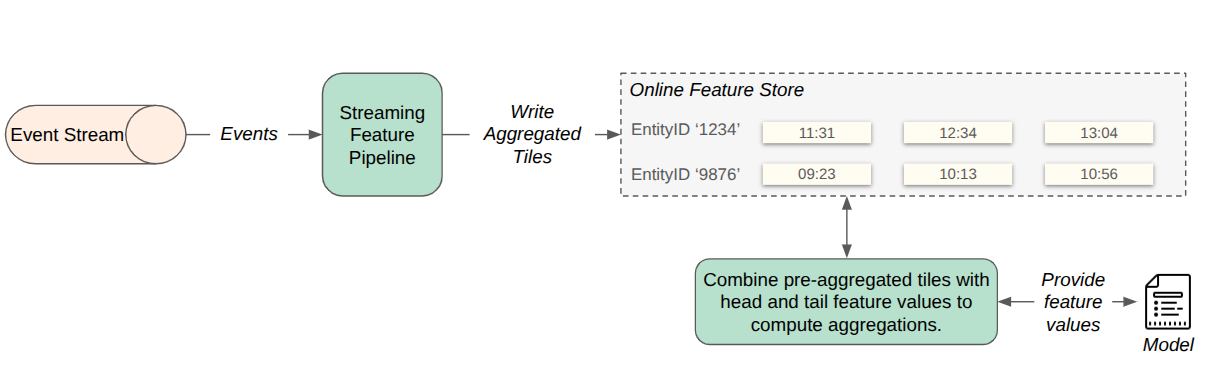

Now imagine a request arrives to compute a rolling aggregation at 1pm. Your tiles only run from 12am to 11:59pm. You will not yet have computed a tile for the current day’s events (from 12am to 1pm), and you cannot use the tile for the oldest day in the interval, because you only need its events from 1pm onwards (the tile for that day also contains events outside the interval). These events that lie outside the tiles are called head and tail events, respectively. Tiled window aggregations are computed by composing the partial aggregates with on-demand aggregations over the unaligned head/tail events.

Figure 4. Tiled aggregation combines precomputed partial aggregates (tiles) with on-demand computation to compose aggregations from both partial aggregates and recent events (head/tail events that are not included in a tile).

More specifically, tiles partition a window of length N into M tiles, where M<<N. Stream or batch processing computes the partial aggregates over the M tiles, and the final aggregation result is composed from the M partial aggregate values along with unaligned head/tail events at the start and end of the window interval, respectively. Tiled aggregation has high operational overhead as it requires both shift left computation and shift right computation, along with storing all events that may be needed for head/tail computations. Incremental views transform the problem into a fully shift-left solution with lower computational complexity.
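The composition of full tiles with head and tail events can be sketched as follows. This is an illustrative toy, not Chronon's implementation: timestamps are integer hours, tiles are 24 hours long, and the helper names are assumptions. A real system would fetch head/tail events through an index rather than scanning all events.

```python
from collections import defaultdict

TILE_HOURS = 24  # tile length, matching the 24-hour tiles in the example above


def build_tiles(events):
    """Precompute (count, sum) per tile; tile k covers hours [k*24, (k+1)*24)."""
    tiles = defaultdict(lambda: [0, 0.0])
    for hour, value in events:
        tile = tiles[hour // TILE_HOURS]
        tile[0] += 1
        tile[1] += value
    return dict(tiles)


def tiled_window_mean(events, tiles, end, window):
    """Mean over [end - window, end) from full tiles plus raw head/tail events."""
    start = end - window
    first_full = -(-start // TILE_HOURS)  # ceiling division: first tile fully inside
    last_full = end // TILE_HOURS         # exclusive upper bound on full tiles
    count, total = 0, 0.0
    for k in range(first_full, last_full):
        c, s = tiles.get(k, (0, 0.0))
        count += c
        total += s
    tail_end = min(first_full * TILE_HOURS, end)     # oldest, unaligned events
    head_start = max(last_full * TILE_HOURS, start)  # newest, not yet tiled events
    for hour, value in events:
        if start <= hour < tail_end or head_start <= hour < end:
            count += 1
            total += value
    return total / count


def brute_force_mean(events, end, window):
    """Reference result: scan every event in the window."""
    values = [v for h, v in events if end - window <= h < end]
    return sum(values) / len(values)


events = [(h, float(h % 7)) for h in range(0, 300, 5)]
tiles = build_tiles(events)
end = 11 * 24 + 13  # 1pm on day 11
tiled = tiled_window_mean(events, tiles, end, window=240)
brute = brute_force_mean(events, end, window=240)
print(tiled, brute)
```

Here the 240-hour window is served from 9 full tiles plus the raw events in the partial days at either end, and it matches the brute-force scan.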

Shift Left with Incremental Views and Feldera

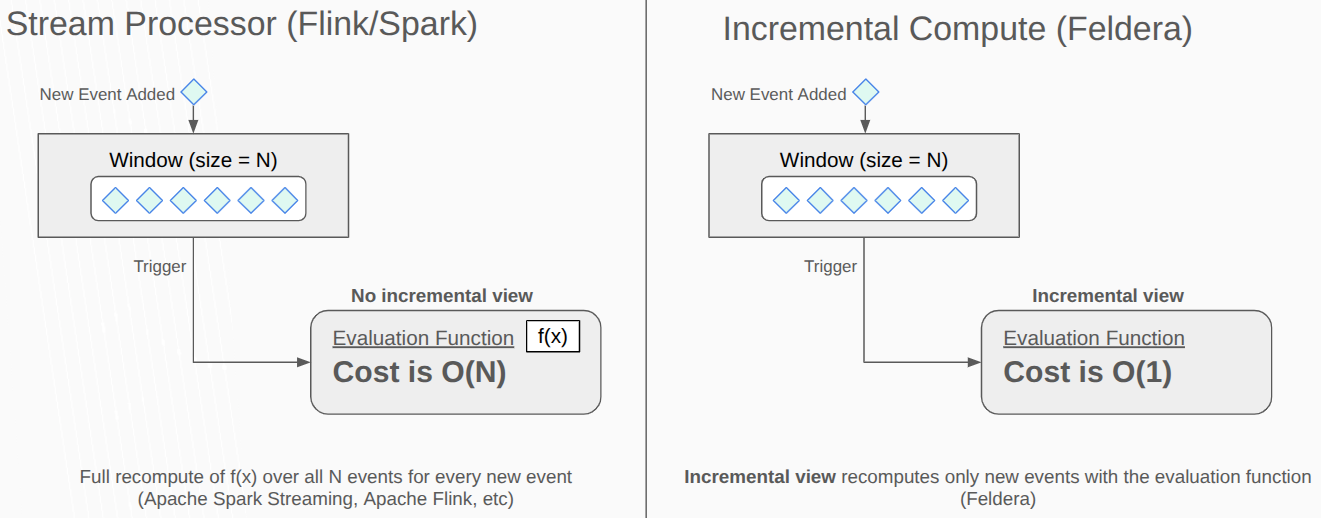

Rolling aggregations can be computed in stream processing systems such as Apache Flink with OVER aggregates. However, even though Apache Flink’s OVER aggregates can be partitioned over many workers, they do not scale well with increasing window size and increasing event throughput: every new event triggers the recalculation of the aggregation function, and its computational cost is proportional to the window size (see Figure 5).

Figure 5. Incremental compute engines transform rolling aggregation computations from an O(N) computational complexity to an O(1) computational complexity problem.

Incremental views solve the scalability challenge by avoiding full recomputation of aggregations when a new event arrives. Instead, they reuse the previously computed value and apply only the changes introduced by new or removed events. As a result, the work performed is proportional to the input/output changes, not the window size.
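The idea of reusing the previous value can be illustrated with a rolling sum. The sketch below is a simplified stand-in, not how Feldera or DBSP is implemented: it keeps the running total, adds each new event, and subtracts events that have expired from the window. Each event is appended once and removed once, so the amortized work per event does not depend on the window size. The class name is hypothetical.

```python
from collections import deque


class IncrementalRollingSum:
    """Rolling SUM and COUNT over a time window, updated per event."""

    def __init__(self, window):
        self.window = window
        self.events = deque()  # (timestamp, value) pairs still inside the window
        self.total = 0.0
        self.count = 0

    def add(self, ts, value):
        # Apply the change introduced by the new event.
        self.events.append((ts, value))
        self.total += value
        self.count += 1
        # Apply the changes introduced by events that just left the window.
        while ts - self.events[0][0] >= self.window:
            _, expired = self.events.popleft()
            self.total -= expired
            self.count -= 1
        return self.total


rolling = IncrementalRollingSum(window=60)
sums = [rolling.add(ts, v) for ts, v in [(0, 10.0), (20, 30.0), (50, 20.0), (70, 40.0)]]
print(sums)
```

This subtraction trick only works for invertible aggregates such as SUM and COUNT (and therefore AVG). MIN and MAX need a different structure, and long-running floating-point sums may need periodic recomputation to limit rounding drift.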

Feldera is the leading open-source incremental compute engine. You write rolling aggregations in SQL, and the SQL is transpiled into Rust code for high performance. Feldera supports incremental view maintenance through its streaming engine DBSP (DataBase inspired by Signal Processing). DBSP implements incremental views using Z-sets, a generalization of relational sets that track not only which elements are present, but also how their counts change over time. In a traditional relational set, an element either exists (count = 1) or does not exist (count = 0). In a Z-set, each element has an integer count that can be positive, zero, or negative:

- Positive counts represent insertions (adding events).

- Negative counts represent deletions (removing events).

This allows Z-sets to represent deltas, the net change between two states, without storing the full state at each step.
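A toy model helps show how a delta updates an aggregate without touching the full state. The sketch below represents a Z-set as a Python dictionary from rows to integer weights. It is an illustration of the concept only, not Feldera's API, and the function names and example rows are assumptions.

```python
def apply_delta(zset, delta):
    """Add a delta Z-set to a Z-set; elements whose weight reaches 0 are dropped."""
    result = dict(zset)
    for element, weight in delta.items():
        new_weight = result.get(element, 0) + weight
        if new_weight == 0:
            result.pop(element, None)
        else:
            result[element] = new_weight
    return result


def weighted_sum(zset):
    """SUM over a Z-set of (user, amount) rows, counting each row `weight` times."""
    return sum(amount * weight for (_user, amount), weight in zset.items())


# Current window contents and the aggregate computed from them.
window = {("alice", 10.0): 1, ("alice", 30.0): 1}
running_sum = weighted_sum(window)

# One event enters the window (+1) and one expires from it (-1).
delta = {("alice", 20.0): +1, ("alice", 10.0): -1}

# SUM is linear, so the new aggregate is the old aggregate plus SUM(delta).
running_sum += weighted_sum(delta)
window = apply_delta(window, delta)
print(running_sum, window)
```

The update to `running_sum` only looks at the two rows in the delta, which is the sense in which the work is proportional to the change rather than to the window size.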

Shift Right with Parallel Pushdown Aggregations in Hopsworks/RonDB

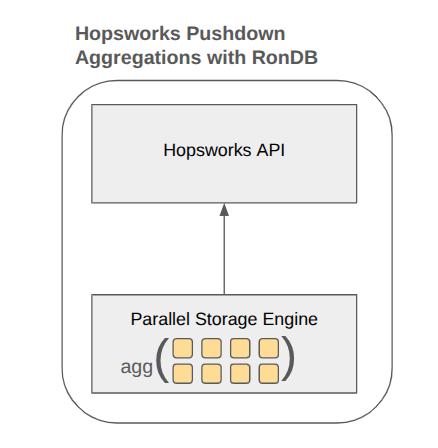

Many organizations try to avoid introducing stream processing to their architecture, as it introduces operational complexity and cost. If your data volume and velocity are not at hyperscaler levels, you can stream raw events into Hopsworks online database, RonDB, and when clients request aggregate computations, they are computed on-demand in parallel in the database nodes in RonDB.

Figure 6. RonDB pushes down aggregation computation to data nodes that compute them in parallel.

Aggregations can be combined (and pushed down to database nodes) with other SQL operations in the same query, such as index scans, LIMITs, and projections. Other feature computation engines that are built on DynamoDB and Redis can also compute aggregations on-demand, but they are limited by the database capabilities. For example, in Redis, clients have to read all the columns and all the rows in the table (as it does not support predicate pushdown or projection pushdown). In contrast, in RonDB, only the columns needed by the query are read, and predicates (e.g., only users based in the 'EU') are applied in the database kernel before the aggregation is computed. The result is orders of magnitude lower latency and higher throughput for selective queries.
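The difference between pushdown and client-side aggregation can be simulated in a few lines. The sketch below does not use RonDB or any database API; the partitions, row layout, and function names are all assumptions. Each simulated data node filters and aggregates its own partition in parallel and returns one small partial result, while the client-side variant has to receive every row first.

```python
from concurrent.futures import ThreadPoolExecutor


def make_partition(node_id, n_rows):
    """Simulated data node partition; rows alternate between 'EU' and 'US'."""
    return [
        {
            "id": node_id * n_rows + i,
            "region": "EU" if i % 2 == 0 else "US",
            "amount": float(i),
            "padding": "x" * 80,  # column the query never needs
        }
        for i in range(n_rows)
    ]


def node_partial_sum(partition, region):
    """Runs on the data node: filter, project, and aggregate locally."""
    count, total = 0, 0.0
    for row in partition:
        if row["region"] == region:   # predicate applied at the node
            count += 1
            total += row["amount"]    # only this column is read for the sum
    return count, total


def pushdown_sum(partitions, region):
    """Nodes aggregate in parallel; only one partial result per node is shipped."""
    with ThreadPoolExecutor(max_workers=len(partitions)) as pool:
        partials = list(pool.map(lambda p: node_partial_sum(p, region), partitions))
    count = sum(c for c, _ in partials)
    total = sum(t for _, t in partials)
    return count, total, len(partials)


def client_side_sum(partitions, region):
    """All rows are shipped to the client, which then filters and aggregates."""
    shipped = [row for partition in partitions for row in partition]
    matching = [row["amount"] for row in shipped if row["region"] == region]
    return len(matching), sum(matching), len(shipped)


partitions = [make_partition(node_id, 1000) for node_id in range(2)]
pushed = pushdown_sum(partitions, "EU")
client = client_side_sum(partitions, "EU")
print("pushdown:", pushed)
print("client-side:", client)
```

Both variants return the same count and sum, but the last element of each tuple shows what crossed the simulated network: one partial result per node versus every row in the table.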

RonDB Pushdown Aggregation Performance Benchmark

Benchmark Setup

- 2 Data nodes: c6i.4xlarge (16c32g)

- 1 Rdrs: c6i.4xlarge (16c32g)

- 1 Benchmark Client: c6i.8xlarge (32c64g)

Methodology

- RonDB Pushdown Aggregation via NdbAggregator C++ API

- Connections: 4 Ndb_cluster_connection objects, 32 workers distributed round-robin (8 per connection)

- Timing: std::chrono::steady_clock with nanosecond precision per query

- Warmup: 5 seconds discarded before each measured 15-second window

- Data: Random values — val1/val2 BIGINT, val3/val4 DOUBLE, filter_date DATE (2024-01-01 to 2024-12-31)

Five tables with identical schema, varying in row count: 100, 500, 10K, 100K, 500K.

    CREATE TABLE bench_qps_{N} (
      id INT NOT NULL AUTO_INCREMENT,
      val1 BIGINT NOT NULL, -- random 0–999999
      val2 BIGINT NOT NULL, -- random 0–999999
      val3 DOUBLE NOT NULL, -- random 0–1000
      val4 DOUBLE NOT NULL, -- random 0–1000
      filter_date DATE NOT NULL, -- random in 2024-01-01 to 2024-12-31
      padding VARCHAR(100) NOT NULL, -- 80-char random string
      PRIMARY KEY (id),
      INDEX idx_date (filter_date) -- ordered index, used by Q4
    ) ENGINE=NDB;

- val1, val2: Integer columns for SUM() aggregation

- val3, val4: Floating-point columns for AVG(), MIN(), MAX(), and expression aggregation (val1 * val3, val3 + val4)

- filter_date: Uniformly distributed across 2024; Q4 filters >= '2024-07-01' (~50% selectivity)

- padding: Simulates realistic row width (~130 bytes per row)

Q1: Simple Aggregation

    SELECT COUNT(*), SUM(val1), SUM(val3), COUNT(val3) FROM table

Q2: Multi-Aggregate (6 aggregations)

At 10K rows, pushdown aggregation (PA) continues to perform well. Other engines would transfer all rows to the client to compute the 6 aggregates, while PA computes them at the data nodes in a single pass.

    SELECT COUNT(*), SUM(val1), SUM(val2), SUM(val3), COUNT(val3), MIN(val4), MAX(val4) FROM table

Q3: Expression Aggregation

Arithmetic expressions (val1 * val3, val3 + val4) are computed directly on data nodes. This is an example of a large table where scan I/O dominates over computation.

    SELECT COUNT(*), SUM(val1 * val3), SUM(val3 + val4), COUNT(val3 + val4) FROM table

Q4: Filtered Aggregation (Index Scan)

    SELECT COUNT(*), SUM(val1), SUM(val3), COUNT(val3) FROM table WHERE filter_date >= '2024-07-01'

Benchmark Results for Q1-Q4

Table 1 below shows experimental performance for our benchmark with Q1-Q4, while varying the number of rows each aggregation is computed over. For each query and number of rows per aggregation, we measure throughput in queries per second (QPS) and latency.

| Query | QPS (100 rows) | Latency ms (100 rows) | QPS (500 rows) | Latency ms (500 rows) | QPS (10K rows) | Latency ms (10K rows) | QPS (100K rows) | Latency ms (100K rows) | QPS (500K rows) | Latency ms (500K rows) |
|---|---|---|---|---|---|---|---|---|---|---|
| Q1 Simple Aggregation | 38813 | 0.9 | 24528 | 1.5 | 2274 | 17.3 | 177 | 217.8 | 45.1 | 658 |
| Q2 Multi Aggregation | 40623 | 0.8 | 23228 | 1.6 | 2057 | 18.9 | 148 | 267.6 | 32.2 | 1212 |
| Q3 Expression Aggregation | 40949 | 0.8 | 23073 | 1.6 | 2116 | 18.6 | 142 | 273.4 | 31.1 | 1266 |
| Q4 Filtered Aggregation | 31524 | 0.2 | 26205 | 0.3 | 4540 | 1.9 | 418 | 19.4 | 58.3 | 152.6 |

Table 1. Benchmark results for Queries 1-4, varying the number of rows aggregations are computed over, and measuring latency and throughput in queries per second (QPS).

From the results with only 2 data nodes (RonDB can scale up to 144 data nodes), you can see that the latency for Q1-Q3 rises sharply once the number of rows per aggregation reaches 10,000 (17-19 ms, up from about 1.5 ms at 500 rows). For Q4, however, latency stays very low at 10,000 rows (1.9 milliseconds), as this query includes an index scan that pushes both range filtering and aggregation to the data nodes, eliminating row transfer entirely. The implication is that if your aggregations also include filters, you can scale pushdown aggregations to much higher performance on the same hardware.

Comparative Guide for Rolling Aggregation

Table 2 provides an overview of the architectural design (shift-left or shift-right) and computational complexity of the technologies presented here.

| Technology | Shift Left (Streaming) | Shift Right (Inference Time) |
|---|---|---|
| Apache Flink | O(N) bucket size | - |
| Tiled Windows | O(N/t) bucket size, where t is the number of tiles | O(t) + O(m), where t is the number of tiles and m is the number of head and tail events |
| Feldera | O(1) bucket size | - |
| Hopsworks with RonDB Pushdown Aggregations | - | O(N/r) compute cost with r database node threads and O(1) network I/O from the DB |
| Client-Side Aggregations | - | O(N/r) with r client-side threads and O(N) network I/O from the DB |

Table 2. Different technologies for computing rolling aggregations. Shift-left precomputes rolling aggregates. Shift-right computes them on-demand. The computational complexity of each technology is also shown.

From Table 2, it is clear that Feldera has the lowest computational complexity for shift-left rolling aggregations. For shift-right, RonDB outperforms naive on-demand computation of aggregates by moving aggregate computation into the database, which reduces network transfer from the database from O(N) to O(1).

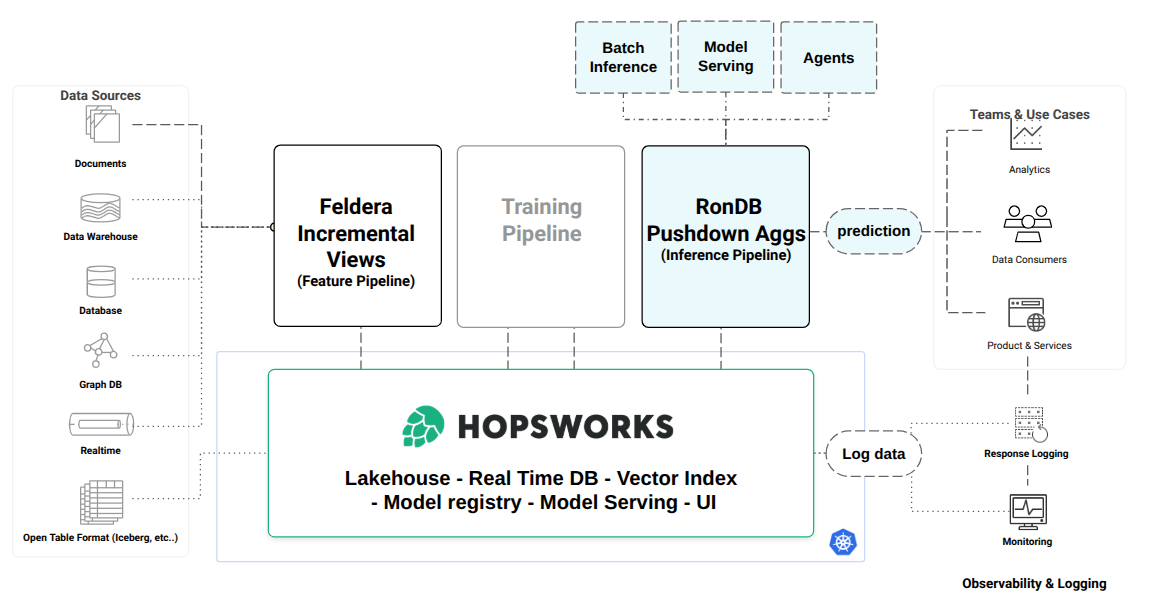

Shift Left or Shift Right Rolling Aggregates in Hopsworks

Hopsworks is an open compute platform, where you can run your pipelines either inside or outside Hopsworks. Depending on your requirements, you can choose either shift-left or shift-right rolling aggregations.

For shift-left, Hopsworks has first-class integration with Feldera, but you can also bring your own Apache Flink platform if you prefer, or even Spark Streaming. Streaming pipelines compute rolling aggregates on event data and store the aggregates in Hopsworks for serving in RonDB at sub-ms latency. Hopsworks also materializes the aggregates to its offline store (lakehouse tables), for later use as training data or historical context data for agents.

For shift-right, you define rolling aggregations as SQL transformations that are applied consistently in training and inference pipelines without skew. In training pipelines, SQL transformations are applied by Hopsworks Query Service or SparkSQL, depending on whether it is a Python program or Spark program, respectively. If you do not want the complexity of a stream processing engine, use RonDB, provided it scales to meet your latency and throughput requirements.

Summary

Rolling aggregations are key to capturing behavior and anomaly signals fresh enough to build truly interactive AI systems. The first scalable solution to computing rolling aggregations, tiled-window aggregations, has been superseded by incremental views. And now, with parallel pushdown aggregations, larger data volumes can be computed on-demand.

Hopsworks supports both shift-left rolling aggregations, with Feldera’s efficient incremental views (O(1) updates), and shift-right rolling aggregations, with RonDB’s parallel pushdown aggregations. Hopsworks gives teams the flexibility to choose between the simplest operations (RonDB) and the largest scale with sub-millisecond freshness (Feldera).