Data/AI Platforms should be “Open” for use by increasingly specialized Compute Engines

The future of Data/AI platforms isn’t a single universal compute engine. It’s an open ecosystem of specialized engines working on the same data platform.

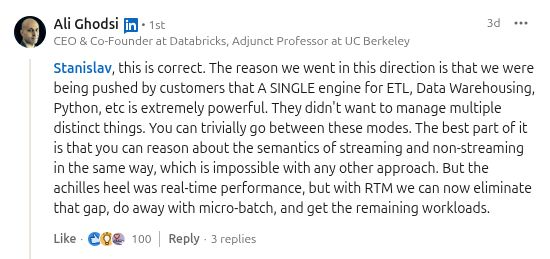

I think Ali Ghodsi is wrong here. He claims that the direction of travel is toward a single engine (Spark) for batch, Python, and streaming workloads.

What happened with increasingly specialized databases appearing from around 2010 is now happening for compute engines. History is repeating itself. Twenty years ago, you could have almost any kind of database you wanted, so long as it was relational and could be accessed through ODBC/JDBC. Over time, though, scale and workload diversity broke that simplicity. As data volumes grew and enterprise use cases became more specialized, a wide range of storage engines became commercially viable and massively successful.

- Key-value stores such as DynamoDB handled highly scalable operational workloads.

- Graph databases such as Neo4j became important for relationship-heavy workloads.

- JSON-oriented databases, such as MongoDB, removed the need for JavaScript applications to have a separate object-relational mapping (ORM) layer.

- Search engines such as Elasticsearch carved out their own category based on inverted indexes.

- Time-series databases such as InfluxDB specialized around metrics and event data.

- Open table formats such as Iceberg, Hudi, and Delta created a new storage layer for large-scale analytical data.

- Event backbones such as Kafka became systems of record in their own right.

- Vector databases, such as Weaviate and Qdrant, supported approximate nearest neighbor search with embeddings.

- In-memory databases such as Redis dominate low-latency use cases.

- NewSQL systems such as RonDB pushed relational semantics into more distributed environments.

Yes, both MySQL and Postgres are still enormously important, and yes, Postgres can often be stretched into roles beyond its original design. But that is beside the point. The point is that the enterprise data stack no longer converges on a single database abstraction. Different storage engines now play important and durable roles for different workloads.

In 2026, something similar is happening with compute engines.

We are going through a Cambrian explosion in compute engines, much like the explosion in data stores around 2010. Spark is the modern-day version of ODBC/JDBC: the one compute engine that is supposed to rule them all. It is the dominant, scalable compute engine and the baseline against which new compute engines define themselves. But just as Postgres extensions did not make Neo4j, InfluxDB, or Weaviate obsolete, Spark will not eliminate newer compute engines.

Instead, specialized engines are emerging for specific computational workloads:

- Apache Flink supports scalable per-event stream processing.

- Feldera supports incremental computation for real-time streaming workloads, such as rolling aggregations.

- Ray supports distributed Python and GPU-heavy workloads.

- DuckDB is a high-performance single-host columnar SQL engine.

- Polars supports high-performance single-node DataFrame workloads.

- Daft supports multimodal distributed data processing.

- DataFusion is an embeddable Arrow-native query engine.

- RisingWave and Materialize support streaming SQL and incremental views.

The direction of travel is the same as with data stores. The promise of Spark was that all compute workloads could be handled by one general-purpose engine. But the workload landscape is now broad enough, and the economics of scale are favorable enough, that many different engines can succeed at once. Some optimize for streaming, some for incremental updates, some for local analytics, some for Python ergonomics, some for distributed ML, some for embedded execution, and some for AI-native data types and pipelines.

That does not mean Spark is irrelevant. It means Spark is no longer the end state. Just as the database world evolved from “everything behind JDBC” to a rich ecosystem of purpose-built storage engines, the compute world is evolving from “everything through Spark” to a diverse ecosystem of purpose-built execution engines.

That is not fragmentation for its own sake. It is specialization driven by real workloads. And in the enterprise, specialization tends to win. Hopsworks is built with this vision in mind, with open data APIs at its core. You can run compute either inside Hopsworks or outside Hopsworks with your engine of choice. Data cannot be kept in a walled garden if it is to power an increasing number and variety of workloads in analytics and AI.
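To make the idea of open data concrete, here is a minimal sketch in which two independent engines read the same file and reach the same answer. It uses only the Python standard library. The in-memory SQLite database and the plain Python loop are stand-ins for real engines such as DuckDB or Polars, and the file name, column names, and sample rows are hypothetical. It does not use any Hopsworks API.

```python
import csv
import os
import sqlite3
import tempfile
from collections import defaultdict

# Hypothetical sample data, written once in an open format (CSV).
ROWS = [("a", 1.0), ("b", 4.0), ("a", 3.0), ("b", 6.0)]


def write_shared_file(path):
    """Write the sample rows to a CSV file that any engine can read."""
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["sensor", "reading"])
        writer.writerows(ROWS)


def mean_with_sql(path):
    """Engine 1: load the file into in-memory SQLite and aggregate with SQL."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE readings (sensor TEXT, reading REAL)")
    with open(path, newline="") as f:
        conn.executemany(
            "INSERT INTO readings VALUES (?, ?)",
            ((r["sensor"], float(r["reading"])) for r in csv.DictReader(f)),
        )
    query = "SELECT sensor, AVG(reading) FROM readings GROUP BY sensor"
    result = dict(conn.execute(query))
    conn.close()
    return result


def mean_with_python(path):
    """Engine 2: stream the same file and aggregate in plain Python."""
    totals = defaultdict(lambda: [0.0, 0])  # sensor -> [sum, count]
    with open(path, newline="") as f:
        for r in csv.DictReader(f):
            acc = totals[r["sensor"]]
            acc[0] += float(r["reading"])
            acc[1] += 1
    return {sensor: s / n for sensor, (s, n) in totals.items()}


def compare_engines():
    """Run both engines against one shared file and return both results."""
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "readings.csv")
        write_shared_file(path)
        return mean_with_sql(path), mean_with_python(path)


if __name__ == "__main__":
    sql_result, py_result = compare_engines()
    print(sql_result, py_result)
```

Neither engine owns the data: each one only needs to understand the file format. At production scale the same pattern would likely use an open table format and purpose-built engines rather than CSV and SQLite.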