AI Lakehouse

TL;DR

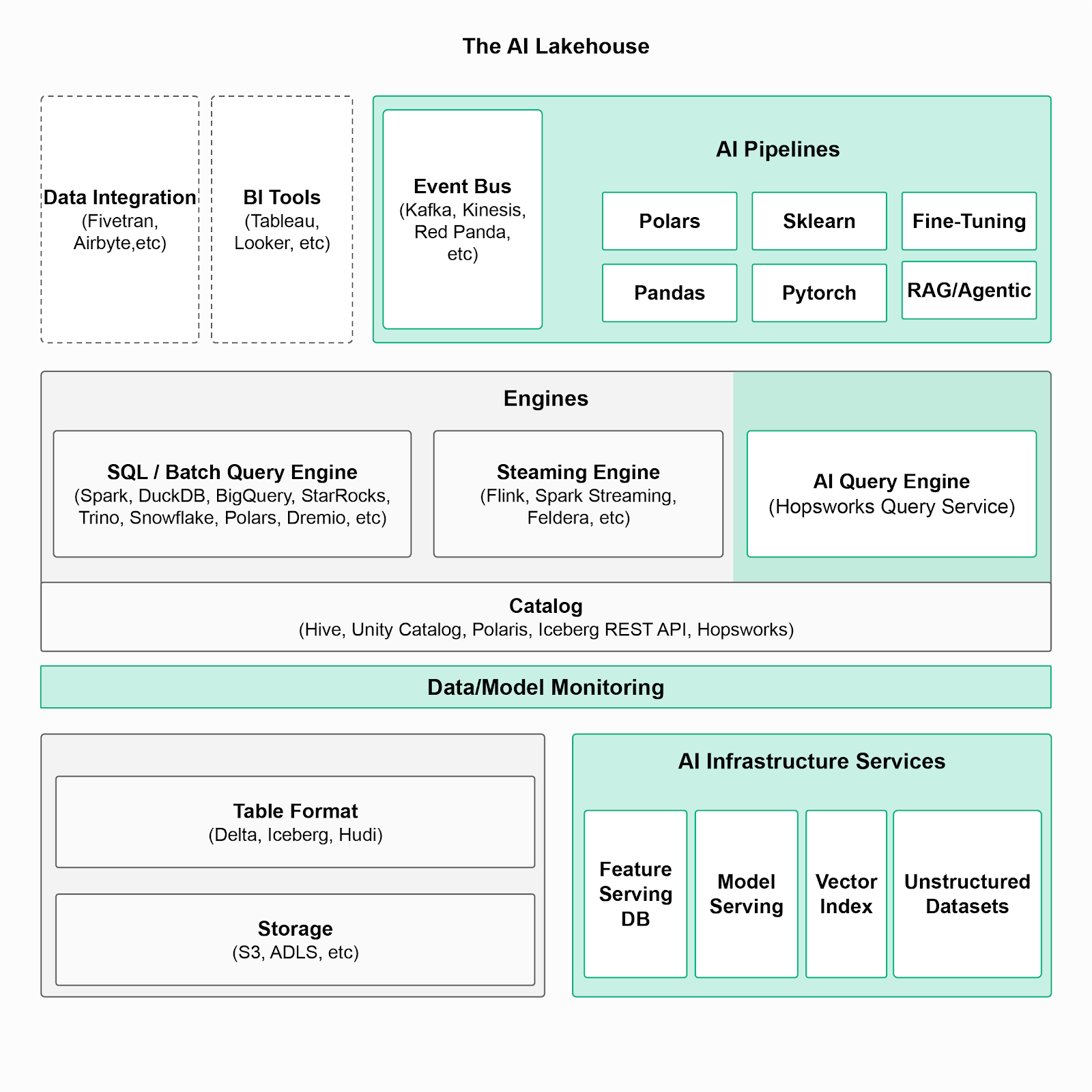

An AI Lakehouse extends traditional lakehouse architecture with infrastructure for production AI, including feature stores, real-time inference, model serving, and vector indexing. It unifies analytics and machine learning workloads on a single governed platform.

What is an AI Lakehouse?

An AI Lakehouse is an architectural paradigm that combines elements of data lakes and data warehouses to support advanced AI and machine learning (ML) workloads. It lets organizations manage large volumes of structured and unstructured data and run AI and ML workloads on the same platform. The AI Lakehouse supports building and operating AI-enabled batch, real-time, and LLM-powered applications.

What are the differences between a Lakehouse and an AI Lakehouse?

A traditional lakehouse focuses on analytics workloads by combining data lake storage with warehouse-style transactions and governance. An AI Lakehouse builds on this foundation by adding components required to develop and operate AI systems at scale.

The main difference between a Lakehouse and an AI Lakehouse lies in the infrastructure and capabilities each offers for AI and ML workloads. A lakehouse is effectively a modular data warehouse that decouples the separate concerns of storage, transactions, compute, and metadata. An AI Lakehouse extends this architecture with components designed specifically for AI/ML, such as an Online Store and a Vector Index.

A Lakehouse provides:

- Decoupled storage and compute

- Transactional data management

- Metadata and governance

- SQL-first analytics workloads

An AI Lakehouse adds:

- Feature store (offline + online)

- Vector index for embeddings and RAG

- Model registry and serving

- Point-in-time query engine

- Monitoring and drift detection

- AI pipeline orchestration
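To make the "vector index for embeddings and RAG" item concrete, here is a minimal sketch of the retrieval step such an index performs. It is a brute-force cosine similarity search in plain Python, not how Hopsworks or any production vector index is implemented; the document IDs, the three-dimensional embeddings, and the `top_k` helper are all hypothetical and chosen only for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, index, k=2):
    """Score every stored vector against the query and return the k best (id, score) pairs."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical toy index: document id -> embedding (assumed values, not real model output).
index = {
    "doc_pricing": [0.9, 0.1, 0.0],
    "doc_refunds": [0.1, 0.9, 0.0],
    "doc_shipping": [0.0, 0.2, 0.9],
}

# Hypothetical embedding of a user question.
query_embedding = [0.8, 0.2, 0.0]

results = top_k(query_embedding, index, k=2)
for doc_id, score in results:
    print(doc_id, round(score, 3))
```

In a RAG application, the retrieved documents would then be passed to the LLM as context. A real vector index typically replaces the exhaustive scan with an approximate nearest-neighbor structure so that lookups stay fast as the number of embeddings grows.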

The AI Lakehouse therefore builds on the lakehouse architecture and optimizes it for AI and ML applications, which supports a more robust MLOps approach to deploying and managing AI projects. Below, you can see Hopsworks’ AI Lakehouse architecture with the functionality needed to build and operate AI systems and to apply MLOps principles to Lakehouse data.

Figure: The AI Lakehouse Architecture

What capabilities are needed for an AI Lakehouse?

As shown in the figure above, certain capabilities are needed on top of a regular Lakehouse infrastructure to build and operate AI systems. The Hopsworks AI Lakehouse provides them as follows:

- AI pipelines: AI pipelines are the structured processes involved in developing, deploying, and maintaining ML and AI models. The pipelines used in AI systems fall into three categories: feature, training, and inference pipelines (FTI pipelines).

- AI query engine (Hopsworks Query Service): The original lakehouse infrastructure was built around SQL, and its query engines do not support AI systems or Python well. The Hopsworks Query Service was built to address this gap. It enables high-speed data processing from the Lakehouse and meets AI-specific requirements, such as creating point-in-time correct training data and reproducing training data that has been deleted.

- Catalog(s) for AI assets and metadata: These include a feature registry and a model registry, along with lineage tracking to support reproducibility.

- AI infrastructure services: AI infrastructure services include model serving, a database for feature serving, a vector index for RAG, and governed datasets with unstructured data.
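The "point-in-time correct training data" requirement mentioned above can be illustrated with a small sketch. For each label, only the most recent feature value observed at or before the label's timestamp is joined, so no future information leaks into training. This is a plain-Python illustration of the idea, not the Hopsworks Query Service API; the entity names, timestamps, values, and helper functions are hypothetical.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical feature history per entity: (event_time, value), sorted by event_time.
feature_history = {
    "customer_1": [
        (datetime(2024, 1, 1), 10.0),
        (datetime(2024, 1, 10), 25.0),
        (datetime(2024, 1, 20), 40.0),
    ],
}

# Hypothetical label events: (entity, label_time, label).
labels = [
    ("customer_1", datetime(2024, 1, 5), 0),
    ("customer_1", datetime(2024, 1, 15), 1),
    ("customer_1", datetime(2023, 12, 31), 0),
]


def point_in_time_value(history, as_of):
    """Return the latest feature value with event_time <= as_of, or None if none exists."""
    times = [event_time for event_time, _ in history]
    idx = bisect_right(times, as_of)
    return history[idx - 1][1] if idx > 0 else None


def build_training_rows(labels, feature_history):
    """Join each label with the feature value that was known at the label's timestamp."""
    rows = []
    for entity, label_time, label in labels:
        history = feature_history.get(entity, [])
        rows.append((entity, label_time, point_in_time_value(history, label_time), label))
    return rows


training_rows = build_training_rows(labels, feature_history)
for row in training_rows:
    print(row)
```

The label dated before any feature value exists gets `None` rather than a later value, which is the behavior that distinguishes a point-in-time join from a naive "latest value" join.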

Why an AI Lakehouse Matters

An AI Lakehouse unifies data and AI infrastructure to support real-time, batch, and LLM workloads on a single governed platform. In doing so, it:

- Eliminates fragmentation between data and ML systems

- Supports LLM-powered and retrieval-augmented applications

- Reduces operational complexity

- Aligns lakehouse architecture with MLOps best practices

FAQs:

When should you adopt an AI Lakehouse?

You should consider adopting an AI Lakehouse when you are building production ML systems and require:

- Consistency between training and real-time inference

- Support for deploying LLM or RAG applications

- Governance, monitoring, and feature reuse

For small-scale experimentation, a basic lakehouse may be sufficient. At scale, an AI Lakehouse simplifies infrastructure and reduces architectural fragmentation.

When should an organization move from a Lakehouse to an AI Lakehouse?

Organizations should consider transitioning to an AI Lakehouse when analytics workloads evolve into production AI systems requiring real-time inference, feature reuse, governance, monitoring, and model lifecycle management. An AI Lakehouse reduces fragmentation between data and ML infrastructure.

Does an AI Lakehouse replace a feature store?

No. An AI Lakehouse incorporates a feature store as a core component. The feature store provides offline and online feature management, while the broader AI Lakehouse architecture integrates additional components such as vector indexing, model serving, and AI-specific query engines.
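The split between offline and online feature management can be sketched with a toy in-memory class. It assumes the offline store keeps the full history of feature values (for training) while the online store keeps only the latest value per entity (for low-latency inference). `ToyFeatureStore`, its method names, and the sample data are hypothetical and do not reflect the Hopsworks API.

```python
class ToyFeatureStore:
    """Illustrative only: an offline history plus an online latest-value view."""

    def __init__(self):
        self.offline = {}  # entity_id -> list of (event_time, features), full history
        self.online = {}   # entity_id -> (event_time, features), latest row only

    def write(self, entity_id, event_time, features):
        """Append to the offline history and update the online row if this write is newest."""
        self.offline.setdefault(entity_id, []).append((event_time, dict(features)))
        current = self.online.get(entity_id)
        if current is None or event_time >= current[0]:
            self.online[entity_id] = (event_time, dict(features))

    def get_online(self, entity_id):
        """Latest features for real-time inference, or None for an unknown entity."""
        row = self.online.get(entity_id)
        return None if row is None else row[1]

    def get_history(self, entity_id):
        """Full history ordered by event time, as used when building training data."""
        return sorted(self.offline.get(entity_id, []), key=lambda row: row[0])


store = ToyFeatureStore()
store.write("user_42", 1, {"clicks_7d": 3})
store.write("user_42", 3, {"clicks_7d": 9})
store.write("user_42", 2, {"clicks_7d": 5})  # late-arriving row; must not overwrite newer data

print(store.get_online("user_42"))
print(store.get_history("user_42"))
```

Writing each feature once and exposing it through both views is one way to keep training and inference consistent; the other AI Lakehouse components listed above sit alongside this store rather than replacing it.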
