Online-Offline Feature Store Consistency

What is online-offline consistency in a feature store?

Features that are stored in both the online and offline stores should be consistent. A replication protocol with a defined consistency guarantee (strong consistency, eventual consistency, and so on) ensures that feature data stored in both the online and offline stores is kept in sync.

What is an example of an online-offline feature store consistency protocol?

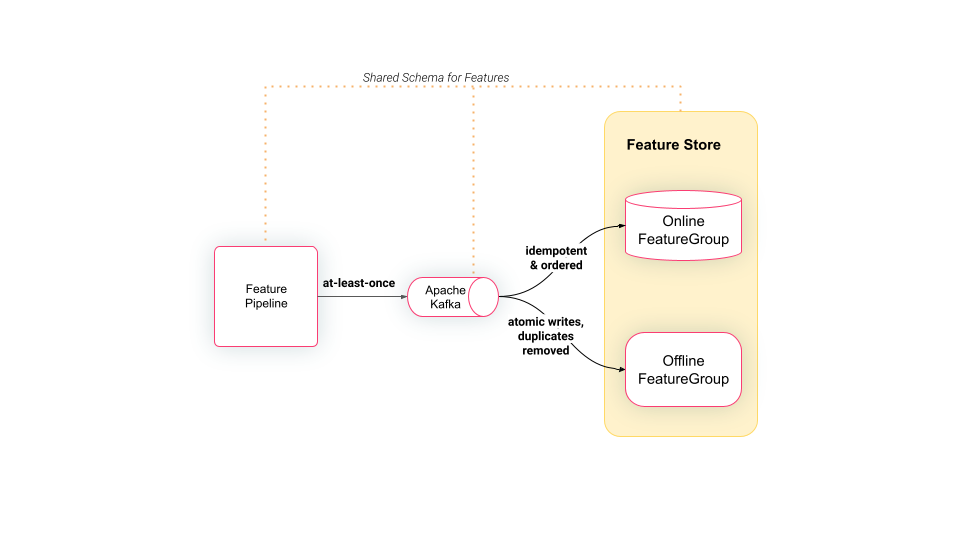

Hopsworks is an open-source feature store in which feature pipelines write features to a topic in Kafka. The Kafka topic has an Avro schema, and the tables in the online and offline stores share that same schema.
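To make the shared-schema idea concrete, here is a minimal sketch in plain Python. It is not the Hopsworks API: the record name `customer_features`, its fields, and the helper `columns_from_avro` are all hypothetical. It only illustrates how a single Avro schema can act as the source of truth from which the column layout of both stores is derived.

```python
import json

# Hypothetical Avro schema for a feature group; the names are illustrative only.
FEATURE_GROUP_SCHEMA = json.loads("""
{
  "type": "record",
  "name": "customer_features",
  "fields": [
    {"name": "customer_id", "type": "long"},
    {"name": "event_time", "type": "long"},
    {"name": "avg_basket_value", "type": ["null", "double"], "default": null}
  ]
}
""")


def columns_from_avro(schema):
    """Return (column name, type) pairs, unwrapping nullable unions such as ["null", "double"]."""
    columns = []
    for field in schema["fields"]:
        avro_type = field["type"]
        if isinstance(avro_type, list):
            # A nullable field is a union; keep the first non-null branch.
            avro_type = [t for t in avro_type if t != "null"][0]
        columns.append((field["name"], avro_type))
    return columns


# Both table layouts are derived from the same schema, so they cannot drift apart.
online_columns = columns_from_avro(FEATURE_GROUP_SCHEMA)
offline_columns = columns_from_avro(FEATURE_GROUP_SCHEMA)
```

Deriving both layouts from one schema, rather than maintaining two table definitions by hand, is one simple way to keep the stores structurally aligned.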

Internal services in Hopsworks copy the data from the Kafka topic to the tables in the online and offline stores, which keeps the feature data in the two stores eventually consistent. Correctness rests on Kafka providing at-least-once guarantees when data is written to the topic: every acknowledged write is delivered, but duplicates may have been introduced into the feature data. The connector that writes to the online store overwrites duplicate values, which makes updates idempotent, and updates do not arrive out of order because both Kafka and the connector provide ordering guarantees for events. The connector that writes to the offline store (Apache Hudi Delta Streamer) ensures that writes to the offline store are atomic and that any duplicates are removed.
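The effect of duplicates under at-least-once delivery can be illustrated with a small simulation. This is a sketch in plain Python, not the actual Hopsworks connectors: the `FeatureEvent` type, the in-memory dictionaries standing in for the two stores, and the deduplication key `(entity_id, event_time)` are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureEvent:
    entity_id: str    # primary key of the feature row (hypothetical)
    event_time: int   # logical timestamp of the feature value
    value: float


def replay_to_online(log):
    """Online store: keep only the latest value per key; overwriting is idempotent."""
    online = {}
    for event in log:  # events are applied in log order
        online[event.entity_id] = event.value
    return online


def replay_to_offline(log):
    """Offline store: keep history, deduplicated on (entity_id, event_time)."""
    rows = {}
    for event in log:
        rows[(event.entity_id, event.event_time)] = event.value
    return rows


def latest_per_entity(offline_rows):
    """Latest offline value per entity, for comparison with the online store."""
    latest = {}
    for (entity_id, _event_time), value in sorted(offline_rows.items()):
        latest[entity_id] = value  # ascending event_time, so the last write wins
    return latest


clean_log = [
    FeatureEvent("a", 1, 1.0),
    FeatureEvent("b", 1, 5.0),
    FeatureEvent("a", 2, 2.0),
]
# The same log after producer retries duplicated two of the records.
duplicated_log = [
    FeatureEvent("a", 1, 1.0),
    FeatureEvent("a", 1, 1.0),
    FeatureEvent("b", 1, 5.0),
    FeatureEvent("a", 2, 2.0),
    FeatureEvent("a", 2, 2.0),
]

online = replay_to_online(duplicated_log)
offline = replay_to_offline(duplicated_log)
```

In this sketch, replaying the duplicated log produces the same online and offline state as replaying the clean log, and the latest offline value for each entity matches the online value. That is the property the two connectors are described as preserving.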