Feature Engineering

What is feature engineering?

Feature engineering is the process of selecting, creating, and transforming raw data into features that can be used as input to machine learning algorithms. Feature engineering should extract signals from the raw data using domain knowledge to create features that capture relevant information and relationships within the data. Well-designed features can significantly improve a model's performance while also requiring less input data than the raw data.

Feature engineering is performed in feature pipelines with model-independent transformations Pandas, Spark, Flink, SQL, and on-demand features using historical data. In contrast, model-dependent transformations, such as feature encoding with Scikit-Learn/Keras/PyTorch, are performed in training/inference pipelines. On-demand features are also computed in online inference pipelines.

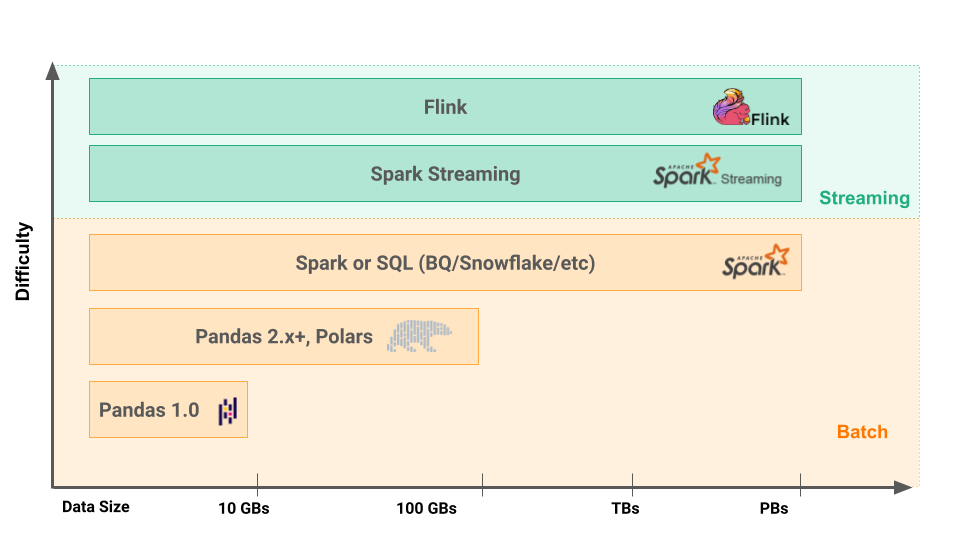

The choice of the most appropriate feature engineering framework for your feature pipeline depends primarily on the data volumes and feature freshness requirements. If each feature pipeline execution processes less than ~100 GBs and does not require very fresh features, you can use Pandas 2.x+ or Polars, which have low development and operational complexity. Spark can be used to process larger data volumes than 100GBs with batch programs. If you need fresh features (from seconds to a few minutes fresh), then you need streaming feature pipelines or on-demand features. Streaming feature pipelines are often implemented in Spark or Flink, where Flink can produce fresher features than Spark as it processes individual events, not micro-batches of events. On-demand features are typically implemented as user-defined functions in either Python or Pandas.